Helping Robots to Feel

Robotics is hot again! 2024 saw ~$6B in robot venture funding, after a drop from ~$7B in 2021. One of the plats du jour is VLAs, or Vision-Language-Action models, for robotic control. They marked an inflection point because they added vision & language as input modalities on top of prior approaches that learned from robot actions alone.

In 2023, Google released RT-2, the first widely publicised VLA, with a control frequency of 5-10Hz. It reached 62% success on novel, unseen tasks, outperforming its predecessor RT-1, a transformer trained only on robot episodes. In 2024, Physical Intelligence released π0, a generalist model that pairs a VLM backbone with a flow-matching policy running at 50Hz. Earlier this year, Figure AI released Helix, a two-part system: a pre-trained VLM serves as the slower “System 2” for thinking, while a faster “System 1” model (also a transformer, but not a VLM) translates the latent semantic representations produced by System 2 into continuous robot actions, at a claimed 200Hz control frequency.

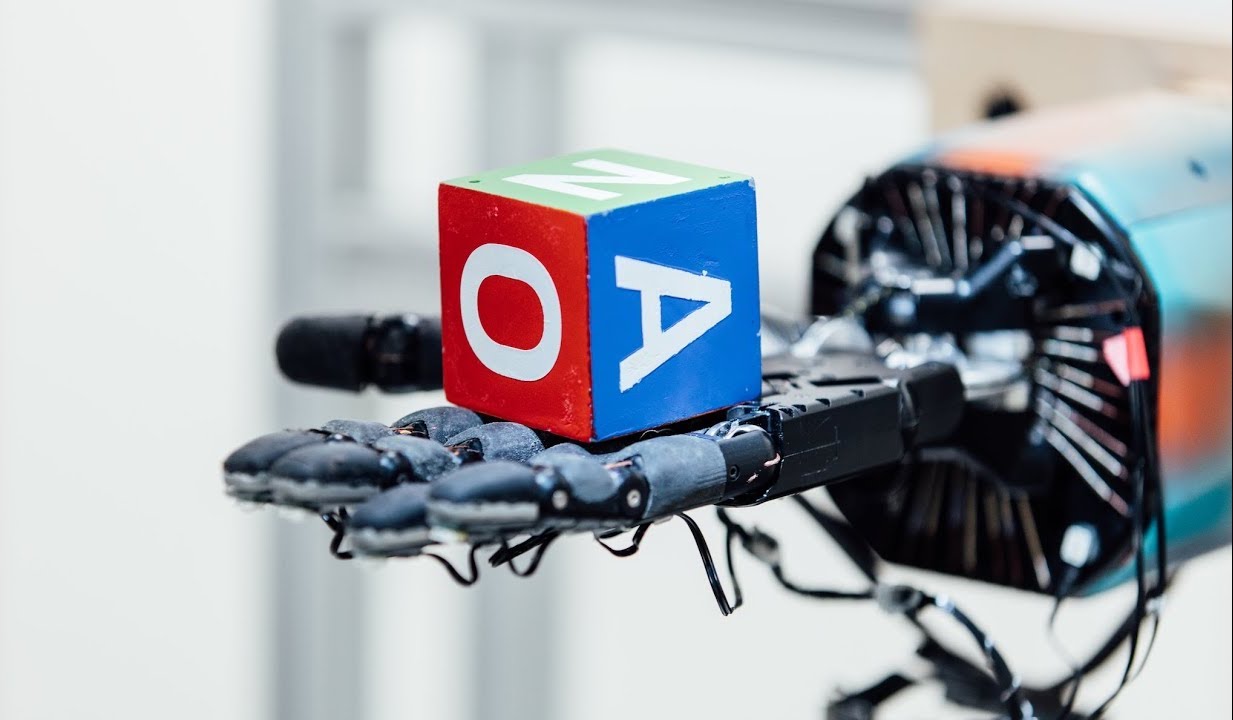

A third modality is emerging as another ingredient to throw into your transformer soup: force. (I am using force & tactile interchangeably but they are not the same. I’m referring to the superset of both.) But why do you need force/tactile as an additional modality?

Force data is an orthogonal addition to position data. Position data works for free-space motion, but contact tasks require force regulation.

Manipulation fundamentally requires the manipulator to be mechanically coupled to the object being manipulated; the manipulator may not be treated as an isolated system.

- Hogan, Impedance Control: An Approach to Manipulation, 1985 (no, I didn’t read all of it)

Hogan proposed a control strategy: Force = Stiffness × Displacement + Damping × Velocity + Mass × Acceleration. That’s three terms relating motion to force, none of which you can capture with vision and positions alone.
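Hogan’s relation fits in a few lines of code. This is a minimal 1-D sketch, assuming `dx`, `dv` and `da` are the errors between desired and actual end-effector position, velocity and acceleration; the class name and the gain values are hypothetical, and real impedance controllers work on 6-D poses with matrix-valued gains.

```python
from dataclasses import dataclass


@dataclass
class Impedance1D:
    """Hypothetical 1-D impedance law: F = K*dx + B*dv + M*da."""

    stiffness: float  # K, in N/m
    damping: float    # B, in N*s/m
    mass: float       # M, desired apparent inertia, in kg

    def force(self, dx: float, dv: float = 0.0, da: float = 0.0) -> float:
        # dx, dv, da: desired minus actual position (m), velocity (m/s)
        # and acceleration (m/s^2) of the end effector.
        return self.stiffness * dx + self.damping * dv + self.mass * da


# The commanded target sits 5 mm "inside" a rigid surface and the gripper is
# held at rest against it, so only the stiffness term contributes: F = K * 0.005.
stiff = Impedance1D(stiffness=100_000.0, damping=500.0, mass=1.0)  # position-control-like
compliant = Impedance1D(stiffness=500.0, damping=20.0, mass=1.0)   # soft, force-aware

print(f"stiff:     {stiff.force(0.005):.1f} N")
print(f"compliant: {compliant.force(0.005):.1f} N")
```

The same 5 mm error yields about 500 N from the stiff setting and 2.5 N from the compliant one. A very stiff loop behaves like pure position control, which is fine in free space but harsh once the gripper touches something; choosing K, B and M is how impedance control turns position error into a regulated contact force.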

Newton’s Second Law, F=ma, means that force is the cause and acceleration is the effect. Acceleration is the rate of change of velocity (a = dv/dt), and velocity is the rate of change of position (v = dx/dt). In other words, position is the double integral of acceleration, and therefore of force divided by mass. Integration suppresses noise, but in turn it also smooths away detail. Force data also captures contact mechanics: fast-changing, transient events like stick-slip, contact onset, and loss of grip. Position data smooths over those events (it shows the motion after the fact, not the cause), which means that force offers subtle, high-frequency cues that position data misses.
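To make the “integration loses detail” point concrete, here is a small numerical sketch: it double-integrates a sinusoidal force acting on a free 1 kg mass and measures how far the position actually swings. The function name and parameters are illustrative assumptions. Analytically, a force A·cos(ωt) produces a peak-to-peak position swing of 2A/(mω²), so position attenuates each force component by 1/ω² and a 200Hz vibration all but vanishes.

```python
import math


def position_swing(force_amp: float, freq_hz: float, mass: float = 1.0,
                   duration: float = 1.0, dt: float = 1e-5) -> float:
    """Peak-to-peak position of a free mass pushed by F(t) = A*cos(2*pi*f*t).

    The mass starts at rest at x = 0. Velocity is updated with the force
    sampled at the middle of each step, then position with the new velocity
    (semi-implicit Euler). Midpoint sampling avoids a constant velocity bias
    that would otherwise show up as drift in x.
    """
    omega = 2.0 * math.pi * freq_hz
    x = v = 0.0
    x_min = x_max = 0.0
    for i in range(round(duration / dt)):
        t_mid = (i + 0.5) * dt
        v += force_amp * math.cos(omega * t_mid) / mass * dt  # first integral: velocity
        x += v * dt                                           # second integral: position
        x_min, x_max = min(x_min, x), max(x_max, x)
    return x_max - x_min


slow = position_swing(1.0, 1.0)    # 1 N push at 1 Hz
fast = position_swing(1.0, 200.0)  # 1 N vibration at 200 Hz (e.g. stick-slip chatter)
print(f"1 Hz:   {slow * 100:.2f} cm")
print(f"200 Hz: {fast * 1e6:.2f} um")
```

With the same 1 N amplitude, the 1 Hz push moves the mass roughly 5 cm peak-to-peak while the 200 Hz vibration moves it roughly 1.3 µm, a 40,000× (200²) difference. A force sensor sees both at full strength; the position trace barely registers the second.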

It’s not entirely new. The first versions were passive devices like the Remote Center Compliance (RCC) in the late 1970s, which used spring mechanisms that “wiggle” to provide compliance (giving way under force). Impedance control, introduced in the mid-1980s, brought active compliance, where stiffness is set in software. These led to early multi-fingered robotic hands like the JPL hand and the Utah/MIT hand, which had rudimentary tactile sensors on the fingertips. But computational constraints made real-time control of so many joints, while also processing sensor feedback, hard to achieve, which limited their capability. In the 1990s, force sensors proliferated, but systems continued to suffer from instability. This persisted until the 2010s and the advent of collaborative robots (cobots), where force awareness was needed for safe deployment alongside human meatbags. Today, finally, we are seeing improvements in (i) sensor resolution, (ii) on-edge computational power, and (iii) software capabilities that might converge into solving one of the holy grails of robotics: dextrous manipulation.

There are papers surfacing that combine the holy trinity into models that show promise compared with vision-only models. In 2023, Chen et al. at the University of Michigan published Visuo-Tactile Transformers for Manipulation, learning a joint visuo-tactile representation that outperformed visual-only models. In 2024, Covariant presented RFM-1, an 8B-parameter model trained on text, images, videos, robot actions, and a range of sensor readings from their production dataset (I couldn’t find more technical details though; does anyone know how well this is doing?).

But more than a stew, it’s a sandwich. Similar to Figure’s approach, there seems to be some convergence towards a control hierarchy with at least two levels: a slower, larger one for planning and reasoning, and a faster, tactile-informed one for execution and quick responses to tactile feedback.
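A toy version of that sandwich shows how the two rates interleave: a slow planner refreshes a nominal grip force a few times per second, while a fast loop reacts to tactile slip on every tick without waiting for the planner. Everything here is a hypothetical stand-in (`slow_planner` for a large VLM, the slip reflex for a small tactile policy), and the 5Hz and 200Hz rates are borrowed loosely from the RT-2 and Helix figures above.

```python
def slow_planner() -> float:
    """Hypothetical stand-in for a large, slow model: returns a nominal grip force (N)."""
    return 2.0


def run_hierarchy(seconds: float, slip_ticks: set[int],
                  control_hz: int = 200, planner_hz: int = 5) -> tuple[int, list[float]]:
    """Interleave a slow planner with a fast tactile reflex.

    slip_ticks: fast-loop tick indices at which the (simulated) tactile sensor
    reports slip. Returns the number of planner calls and the grip force
    commanded on every fast tick.
    """
    ticks_per_plan = control_hz // planner_hz  # 40 fast ticks per planning step
    nominal, boost = 0.0, 0.0
    planner_calls = 0
    commands: list[float] = []
    for tick in range(round(seconds * control_hz)):
        if tick % ticks_per_plan == 0:
            nominal = slow_planner()  # slow level: re-plan occasionally
            planner_calls += 1
        if tick in slip_ticks:
            boost += 0.5  # fast level: tighten immediately on slip
        commands.append(nominal + boost)
    return planner_calls, commands


calls, commands = run_hierarchy(1.0, slip_ticks={10})
print(calls, len(commands), commands[9], commands[10])
```

Over one second the planner runs 5 times and the fast loop 200 times; the slip at tick 10 raises the command from 2.0 N to 2.5 N within one 5 ms tick, while the planner’s next update is still 150 ms away. Here the reflex offset simply persists; a real stack has to decide whether the planner absorbs or resets it.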

Why isn’t it ubiquitous already? Robotics as a whole suffers from a data bottleneck. Real-world information (physics, textures, movement) isn’t in your Common Crawl datasets. It is also embodiment-specific, spread across a whole array of different hardware configurations. This is particularly pronounced in force/tactile domains, where sensors are expensive.

- Force-torque sensors: precision devices that detect and quantify the force and torque applied at a given point. Torque is basically rotational force (a twist). The most common type is 6-axis (3 force components along X, Y, Z and 3 torque components about those axes, i.e. roll, pitch, yaw). They cost anywhere from a few thousand dollars to $10K.

- Tactile sensors: detect local pressure, texture, slip, and vibration. Examples include:

  - Optical: when the gel lining deforms against an object, an internal camera captures a detailed image of the deformation, e.g. the GelSight DIGIT, which costs $350 a pop.

  - Electrode-based: rubbery pads with embedded electrodes whose readings change when squeezed.

  - Fiber optic: e.g. a 2023 design where optical fibers are tied into knots and embedded in a pad. When force is applied, the distortion changes how light travels through the fibers, so direction and magnitude can be inferred from the light output.

  - Meta’s Digit 360, with over 8M sensing pixels (“taxels”, tiny pressure-sensitive points arranged in an array).
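The two sensor families produce differently shaped data. A 6-axis force-torque reading is a wrench (a force vector plus a torque vector), and the torque depends on where the force acts (τ = r × F). A taxel array is closer to a pressure image, from which you can pull a total force and a centre of pressure. The sketch below assumes plain Python tuples and lists, SI units, and a hypothetical 3×3 taxel grid; the names are illustrative, and real sensor drivers expose their own formats.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Cross product a x b."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


@dataclass
class Wrench:
    """One 6-axis F/T reading: force (N) and torque (N*m) about the sensor origin."""
    force: Vec3
    torque: Vec3

    def about(self, offset: Vec3) -> "Wrench":
        """Same wrench expressed about a point `offset` (m) from the sensor origin.

        tau_p = tau_s - offset x F, e.g. to move a wrist reading to a fingertip.
        """
        d = cross(offset, self.force)
        return Wrench(self.force, tuple(t - di for t, di in zip(self.torque, d)))


def center_of_pressure(taxels: list[list[float]], pitch: float) -> tuple[float, float, float]:
    """Total normal force (N) and centre of pressure (x, y in m) of a taxel grid.

    taxels[row][col] holds the force on each taxel; pitch is the taxel spacing.
    Coordinates are measured from taxel [0][0]; x follows columns, y follows rows.
    """
    total = sum(sum(row) for row in taxels)
    if total == 0:
        raise ValueError("no contact")
    x = sum(f * c * pitch for row in taxels for c, f in enumerate(row)) / total
    y = sum(f * r * pitch for r, row in enumerate(taxels) for f in row) / total
    return total, x, y


# A 10 N force along +z applied 0.1 m along +x from the sensor shows up as torque about y.
r, f = (0.1, 0.0, 0.0), (0.0, 0.0, 10.0)
reading = Wrench(force=f, torque=cross(r, f))
print(reading.torque)           # torque about the sensor origin
print(reading.about(r).torque)  # about the contact point itself: no torque left

grid = [[0.0, 0.0, 0.0],
        [0.0, 1.0, 3.0],
        [0.0, 0.0, 0.0]]
print(center_of_pressure(grid, pitch=0.002))
```

The wrench reads 1 N·m of torque about the y axis at the sensor and none about the contact point, which is why F/T readings are usually re-expressed at the tool or fingertip. The taxel grid gives 4 N total with the centre of pressure pulled toward the heavier right-hand taxel.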

Why so many things jammed into one artifact just to grasp a pen? Well, human hands have a bajillion different sensors that feed into our subconscious calculus for movement. For example:

- Pacinian corpuscles are capsules around nerve endings, deep in the dermis. They are fast-adapting (responding to change) and detect vibration at 60-400Hz, such as slip onset.

- Meissner corpuscles are also little nerve-ending capsules, but closer to the surface. They’re also fast-adapting, and detect things like flutter and low-force contact at 10-50Hz, which matters for grip control.

- Merkel cells/disks sit at the epidermal-dermal boundary at the base of your fingerprint ridges. They are slow-adapting (firing continuously under sustained pressure) and sense edges, shapes, and textures.

- Ruffini endings are deeper in the dermis, slow-adapting, and sense skin stretch and joint movement - important for proprioception (kinesthetic awareness) and hand configuration.

- Tendons sense tension, and muscles sense stretch and length; both also feed proprioception.

(These are completely unnecessary for the journeyman technologist to understand but I thought it was cool!)
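The fast- versus slow-adapting split has a loose engineering analogue that is handy when processing tactile streams: a slow-adapting channel reports the sustained pressure level, while a fast-adapting channel reports only changes, roughly a first difference or high-pass filter. This is a crude illustration with made-up function names, not a model of the receptors themselves.

```python
def slow_adapting(samples: list[float]) -> list[float]:
    """SA-like channel: keeps reporting the pressure level for as long as it is held."""
    return list(samples)


def fast_adapting(samples: list[float]) -> list[float]:
    """FA-like channel: responds only to changes (absolute first difference)."""
    out: list[float] = []
    prev = samples[0] if samples else 0.0
    for s in samples:
        out.append(abs(s - prev))
        prev = s
    return out


# Press, hold, release: the SA channel stays active through the hold,
# the FA channel fires only at contact onset and at release.
press = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0]
print(slow_adapting(press))
print(fast_adapting(press))
```

Running both channels side by side is one simple way to separate “am I still holding it?” from “did something just change?”, such as the onset of slip.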

How are people approaching this data bottleneck? I’ve met companies doing some version of the below:

| Approach | Pros | Cons | Examples |
|---|---|---|---|
| Teleoperation: learning from humans wearing some haptic feedback mechanism, mapping sensor data to action decisions and collecting data to build models | Data is specific to the target task; if you find commercial use cases for the teleops, you can fund your data collection without relying on low-context-bro-vc-money. Real-world use also yields higher-quality data distributions that are hard to discover through random exploration. | Any gap in timing or feedback resolution for the operator can lead to learned behavior that doesn’t generalize well (e.g. operators may grip harder because they can’t feel well, so the model learns to over-grip). | Feel the Force: Contact-Driven Learning from Humans (2025) |
| Reinforcement learning in simulation: training on simulated tasks in a virtual environment | Can run millions of episodes cheaply, and modern simulators like MuJoCo have sophisticated contact dynamics | Sim-to-real transfer is hard, as contact dynamics are complicated to model well; reward design can lead to reward hacking (dense rewards) or exploration problems (sparse rewards) | AnyRotate: Gravity-Invariant In-Hand Object Rotation with Sim-to-Real Touch (2024). Also, OpenAI’s Dactyl cube manipulation! |
| Reinforcement learning in the real world | Learns actual physics and sensor characteristics, and naturally handles real-world stochasticity | Sample-inefficient, slow, and expensive | Learning Tactile Insertion in the Real World (2024) |
| Imitation learning: learning from expert demonstrations through behavioral cloning | Stays close to demonstrated trajectories, reducing dangerous exploration | Experts demonstrate “best” behavior, which may not cover edge cases; on the flip side, the performance ceiling is limited by demonstrator skill | SRT-H: A Hierarchical Framework for Autonomous Surgery via Language Conditioned Imitation Learning (2025) (published a few weeks ago; the first autonomous robotic gallbladder removal) |
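The teleoperation con and the imitation-learning ceiling are the same failure seen from two sides: behavioral cloning fits whatever the demonstrator did, bias included. A minimal sketch, assuming a made-up linear policy from required grip force to commanded grip force, and synthetic demonstrations in which an operator with poor haptic feedback squeezes about 50% harder than needed:

```python
import random


def fit_linear_policy(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Behavioral cloning in its simplest form: least-squares fit of y = w*x + b."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    w = cov / var
    return w, my - w * mx


random.seed(0)
needed = [random.uniform(1.0, 5.0) for _ in range(200)]    # force the task truly needs (N)
demo = [1.5 * f + random.gauss(0.0, 0.1) for f in needed]  # operator over-grips by ~50%
w, b = fit_linear_policy(needed, demo)
print(f"learned policy: grip = {w:.2f} * needed + {b:.2f}")
```

The fitted slope comes out near 1.5, so the cloned policy over-grips exactly as the operator did; nothing in the demonstrations tells it that a slope of 1.0 would have sufficed.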

Or you can avoid the bottleneck altogether with surgical correction via mechanistic interpretability (especially since VLAs are smaller than LLMs and less expensive to analyze). The challenge I see with that approach as a business is that no closed-source model provider would want to give you free-rein access to their model weights so you can interpret the mechanistics yourself; more value accrues to being part of the toolkit a foundation model developer wields. There’s also some work on universal representations of intelligence showing up across transformer-esque models that could perhaps bypass dissection for the same purpose. That’s a post for later!

There is another angle to reinforcement learning: since it does not rely on human-generated data in the traditional sense, its objective doesn’t drag it down into the realm of human understanding. Why should robots be humanoid? Why should they be bound by the same physical constraints, sensory modalities, and control hierarchies as us? Our current built environment sprang up much faster than our human bodies could naturally adapt to it. What would a physical entity natively adapted for this current world look like? Would it still have joints, sensors, and limbs the way we do? Perhaps not!

Holler if you know other interesting approaches!
